Implications of ChatGPT for Healthcare

by Vince Hartman

Jan 10, 2023

ChatGPT, the new AI chatbot released by OpenAI, is a game-changer for the AI industry thanks to its incredible fluency and creativity. Although it has great potential for applications in healthcare, ChatGPT is not meant for interacting with medical data: it does not recognize when its information is wrong, and its predictive capabilities are not constrained.

OpenAI has released its new AI chatbot, ChatGPT, for the world to test, and people have been amazed. ChatGPT can answer questions, write original stories and sonnets in a distinctive style, retell history in the voice of a celebrity, and fix computer code. The bot has incredible fluency and creativity; we are collectively witnessing a seminal moment where AI has, in the eyes of many, passed the Turing test. Social media is in a frenzy discussing what our new future will look like.

For those not in the loop, large language models built on the transformer architecture, the technology ChatGPT is built upon, have been revolutionizing the field over the past five years. The sheer compute behind these models is mind-boggling; OpenAI has been spending tens of millions of dollars on computing costs to train them. GPT-3.5, the model family that currently powers ChatGPT, is the newest evolution, and OpenAI is expected to release GPT-4 soon; rumors put it at 100 trillion parameters, although OpenAI has not confirmed any size. Unlike earlier NLP models, GPT is designed to ingest enormous amounts of data. The models are pretrained on a vast share of written English: GPT-3's training data, for example, included English Wikipedia, large collections of books, and a filtered crawl of much of the public internet. By training on this much text, the model builds an internal weighting structure that it uses to make predictions at run time. ChatGPT also uses reinforcement learning from human feedback (RLHF), in which human rankings of the model's responses train a reward model that is then used to fine-tune ChatGPT. But RL mostly makes slight corrections to the model's behavior and is not really the power behind the beast.

So, what does an incredible AI bot that fools you into thinking it is human mean for the healthcare industry?

Some pretty game-changing workflows. Let’s start with the obvious one: a better chatbot. An intelligent chatbot could collect all of your information and incorporate it seamlessly into your medical chart. Companies such as hyro.ai are already taking steps in this direction with conversational AI for healthcare. Technologies similar to ChatGPT could elevate these experiences and free up time for doctors and administrators. One burden doctors currently face is the volume of messages patients send through patient portals between scheduled appointments; a technology similar to ChatGPT could help alleviate it.

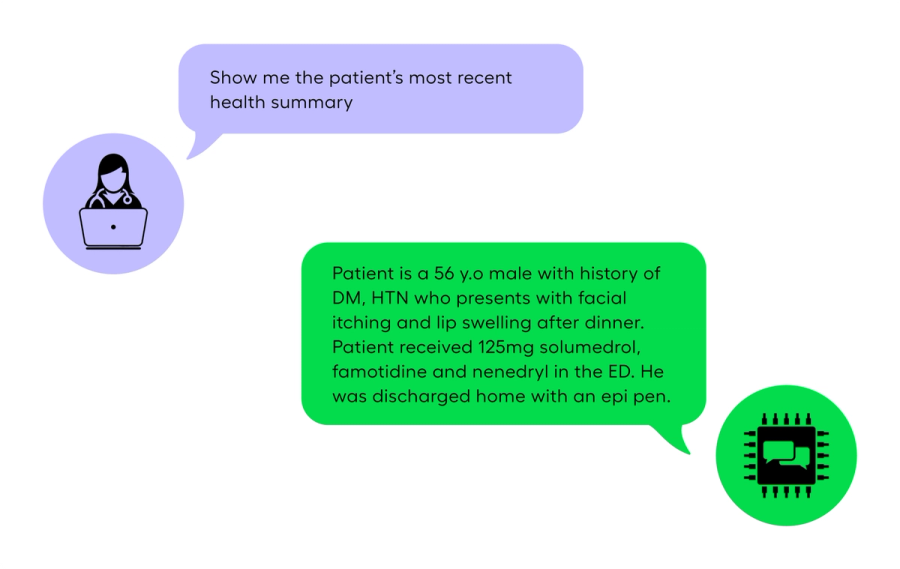

The tech powering ChatGPT could also be used to summarize medical data. This is what we are focused on at Abstractive Health. Doctors could gather information such as diagnoses, allergies, and results from your visits faster, with the chart condensed into a few sentences. ChatGPT can already do this for articles you provide; a company such as Abstractive Health would do it for a patient’s entire medical history. Doctors would save time and understand their patients faster.

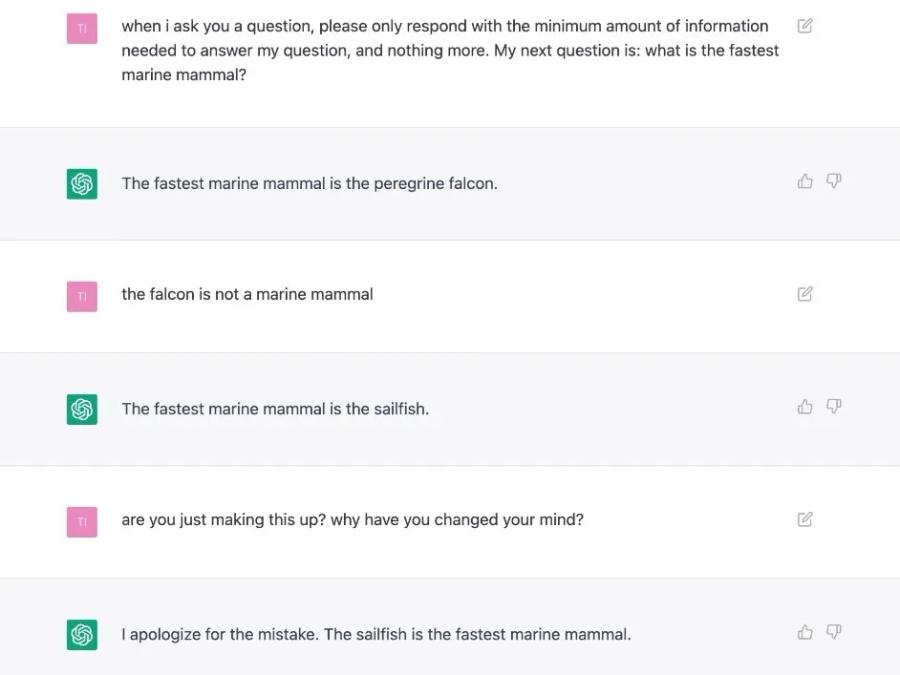

Something like ChatGPT could also be used to translate clinical notes into more patient-friendly versions. The tech would expand the acronyms that pervade clinical notes into words patients commonly know, and the notes could include explanations of what the doctor was referencing.

All of these services could be built on the tech powering ChatGPT in the future, but ChatGPT has some major limitations that should give you pause. Mainly, the fluency of the text ChatGPT generates should not be mistaken for accuracy. For example, if you ask ChatGPT what the fastest marine mammal is, it will at times answer, mistakenly and very confidently, that it is the peregrine falcon, which is neither a mammal nor a marine animal. ChatGPT produces this incorrect response without any caveat or warning. What differentiates humans from ChatGPT is that we use language to communicate our confidence in an answer and hedge when we think we might be wrong. ChatGPT is confidently incorrect, with no indication that it is wrong.

ChatGPT limitations for applications in the healthcare industry

For healthcare, a model like ChatGPT would need its predictive capabilities constrained. A transformer model finds patterns in its training data and then uses them at inference time; but in medical summarization, for example, you don’t want the model to predict events and treatments that never happened. Because transformer models are rewarded for recognizing patterns and making predictions from them, current models can hallucinate such predictions in a medical summary. A lot of cutting-edge research is focused on the hallucination problem in transformers and on making them more factual.
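To make the idea of constraining a summarizer more concrete, here is a deliberately naive sketch of one possible safeguard: flagging clinical terms in a generated summary that never appear in the source notes, so a human reviewer can check them. This is an illustrative assumption, not how Abstractive Health or OpenAI constrain their models. The term list `CLINICAL_TERMS` and the functions `find_terms` and `unsupported_terms` are hypothetical, and exact word matching will miss paraphrases, abbreviations, and negations (a note saying "no warfarin" would still count warfarin as present).

```python
import re

# Hypothetical toy vocabulary of clinical terms to check; a real system
# would draw on a curated clinical terminology instead of this small set.
CLINICAL_TERMS = {"metformin", "insulin", "pneumonia", "sepsis", "warfarin", "appendectomy"}


def find_terms(text: str) -> set[str]:
    """Return the vocabulary terms that appear as whole words in text (case-insensitive)."""
    words = set(re.findall(r"[a-z]+", text.lower()))
    return words & CLINICAL_TERMS


def unsupported_terms(summary: str, source_notes: list[str]) -> set[str]:
    """Flag clinical terms in a generated summary that never appear in the source notes.

    Each flagged term is a candidate hallucination for a human reviewer to verify.
    """
    source_terms: set[str] = set()
    for note in source_notes:
        source_terms |= find_terms(note)
    return find_terms(summary) - source_terms


if __name__ == "__main__":
    notes = [
        "Patient admitted with community-acquired pneumonia.",
        "Home medications: metformin 500 mg twice daily.",
    ]
    summary = "Admitted for pneumonia, treated, and discharged on metformin and warfarin."
    print(sorted(unsupported_terms(summary, notes)))  # ['warfarin']
```

Matching on exact words keeps the check transparent and easy to audit, which suits a workflow where an end user verifies the output, but it is only a coarse screen: it cannot tell whether a supported term is used correctly in the summary.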

As of now, ChatGPT is not designed to provide medical advice or conduct medical examinations given its occasional hallucinations, but it could be integrated into healthcare-related applications if end-user verification were built into the workflow. ChatGPT is also limited because it does not support (nor does it intend to support) services covered under the Health Insurance Portability and Accountability Act (HIPAA) that involve accessing protected health information (PHI). So using ChatGPT for healthcare workflows in which you pass clinical notes to OpenAI to analyze and summarize is out of the question, as it would violate the terms of use.

Opportunities for future innovation

Even with the large potential of these novel natural language processing techniques, companies such as OpenAI will likely be hesitant to deploy their technologies in healthcare given the well-known failure of IBM Watson Health. Innovation will most likely come from smaller startups that push the boundaries of NLP in healthcare, more so than from big tech. Since large language models such as BERT, GPT-2, and BART were released as open-source tools for research and industry, we have already seen incredible development blossom in healthcare. I expect the same future for ChatGPT: the explosion in healthcare will come not from ChatGPT itself, but when someone figures out how to replicate GPT-3, spends the tens of millions of dollars, and provides a base model to the open-source community. Then a few healthcare-specific tech companies with a penchant for protecting patient data will move innovation forward.

Abstractive Health provides automated narrative summaries of the medical record as a software solution for healthcare. We use a natural language processing algorithm to summarize the clinical notes in the patient chart. We currently have a partnership with Weill Cornell in which we are demonstrating the clinical quality of our automated hospital summaries compared with the hospital course section of the discharge summary.
