Transformers Are a Game Changer for Healthcare

by Vince Hartman

Jan 9, 2023

Transformers are a sequence-to-sequence machine learning model introduced by Google in 2017. They can accurately model deep relationships within sentences and perform tasks such as document classification, summarization, and question answering. Transformers are crucial for creating a narrative summary in healthcare because they reach very high accuracy and are easy to maintain once in production.

Natural Language Processing (NLP) has been a progression of ever-improving models: from bag of words (1954), to TF-IDF (1972), to RNNs and LSTMs (1997), and finally to Transformers (2017). Many don’t realize that 20 years ago NLP models were already at 85%+ accuracy at automating medical codes for patient encounters using text classification and Naive Bayes. Customer service automation through chatbots has been in healthcare even longer. These early models had impressive use cases and accuracy. And 10 years ago, LSTMs, which are well suited to time series and sequential data like language, could be used to predict diagnoses such as sepsis and other diseases from clinical notes. Most of the commercial healthcare NLP market today still does not use Transformers. Where Transformers have excelled, though, is in matching (or even beating) human performance at accuracy levels of 99%+. For example, medical coding in radiology can be fully automated apart from a small exception pool. NLP tasks such as machine translation and summarization are also now possible in healthcare: Transformers make it possible to translate dense doctor language into a patient-friendlier version.

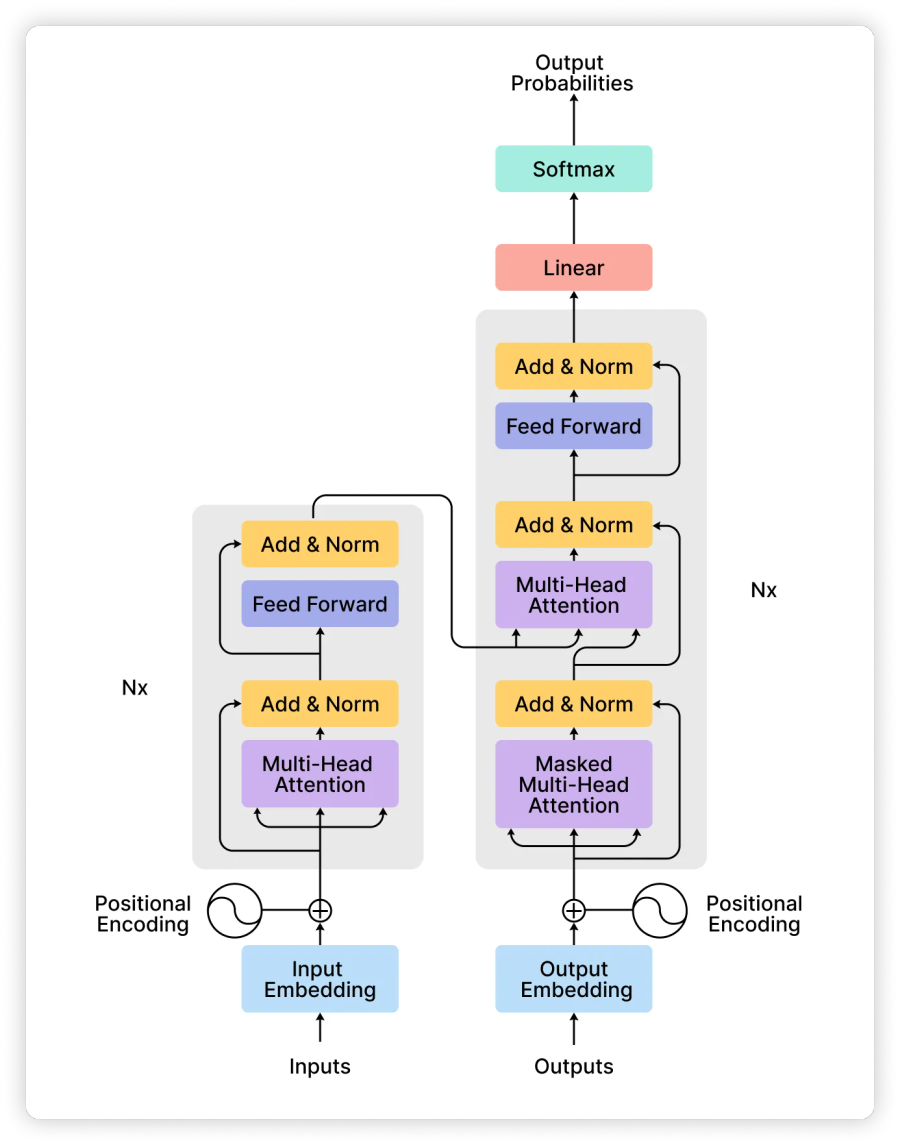

So what are transformers?

Transformers are a machine learning sequence model that was developed by Google in 2017 and that uses a mathematical mechanism called attention to determine the weighting of word vectors. Attention is just a fancy way of saying transformers rely on a lot of matrix multiplications. Matrix multiplication parallelizes well, which lets it take full advantage of GPUs. Before transformers, LSTMs were all the rage in NLP, but an LSTM processes each word only after the one before it, so it can’t be trained in parallel across a sequence. LSTMs could never scale to the training sets with millions of records and the models with billions of parameters that we have recently seen with transformers such as GPT-3. Transformers also take an entire sequence into account at once, while LSTMs are state dependent: the earlier portions of a long sequence are virtually lost by the end of it. Because Transformers weigh every word against every other word at once (self-attention), they are actually computationally worse in time than LSTMs as the sequence grows (O(N²) in the sequence length N), but parallelization on GPUs allows them to run and finish faster in practice.
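To make the idea of attention concrete, here is a minimal sketch in plain Python. It is an illustration under simplifying assumptions, not the implementation of any production model: the function names and the toy vectors are made up for this example, and the learned query, key, and value matrices that a real transformer applies are left out. It shows how every word is scored against every other word, which is where the O(N²) cost comes from.

```python
import math


def softmax(row):
    """Turn a list of scores into weights that sum to 1."""
    peak = max(row)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """Scaled dot-product self-attention with queries = keys = values = vectors.

    Assumption: a real transformer first multiplies the inputs by learned
    query, key, and value matrices; that step is omitted to keep this short.
    """
    dim = len(vectors[0])
    scale = math.sqrt(dim)
    # Score every word against every other word: an N x N table,
    # which is the source of the O(N^2) cost in sequence length.
    scores = [
        [sum(q * k for q, k in zip(query, key)) / scale for key in vectors]
        for query in vectors
    ]
    weights = [softmax(row) for row in scores]
    # Each output vector is a weighted average of all input vectors.
    outputs = [
        [sum(w * v[d] for w, v in zip(row, vectors)) for d in range(dim)]
        for row in weights
    ]
    return weights, outputs


# Toy 2-dimensional "word vectors" (made-up values, not real embeddings).
toy_vectors = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
weights, outputs = self_attention(toy_vectors)
```

Each row of `weights` sums to 1 and says how much one word attends to every word in the sequence. No row depends on another row, so on a GPU the whole table can be computed at once as a matrix multiplication instead of looping word by word.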

The impact of transformers in document summarization for healthcare

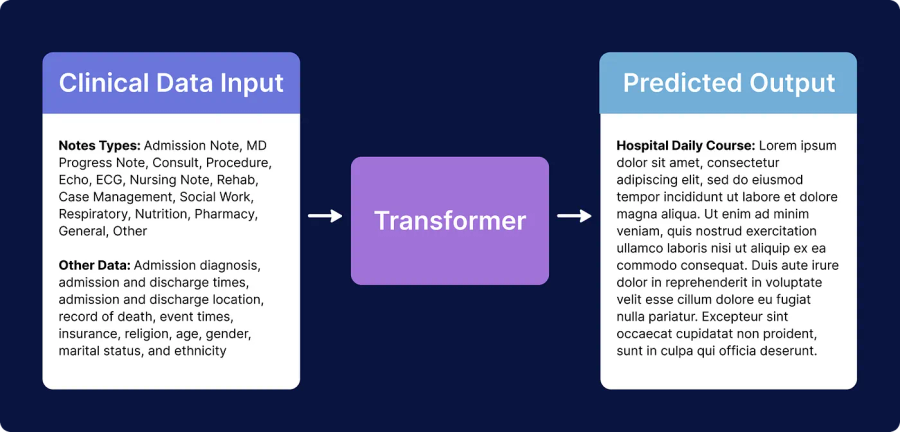

What this all means is that transformers are able to capture deeper relationships within sentences and perform better at NLP tasks such as document classification, sentiment analysis, machine translation, question answering, and summarization. In healthcare, that means more accurate diagnostic prediction, more automation of rote tasks such as medical coding and data entry, and precision medicine (designing a medication plan for each patient). Before transformers, the best approach to building a patient summary from a health record was rule-based branching logic combined with keyword extraction through a graph-based algorithm such as TextRank. These early models had low fluency and were extremely difficult to maintain in production. That is why truly automated narrative summaries in healthcare have been non-existent: if a doctor wanted to read a good summary of the medical chart, they relied on another doctor to comb through the chart and write one manually. Transformers are finally good enough as a machine learning model to solve a healthcare problem such as document summarization.

For this reason, our NLP at Abstractive Health is based on transformers. We are experts at using these models and have spent a lot of time adapting them to the specific challenges of healthcare, such as long documents and medical factuality. At the core, we believe that Transformers are the new game-changing AI that is already revolutionizing healthcare.

Abstractive Health provides an automated narrative summary of the medical record as a software solution for healthcare. We use a natural language processing algorithm to summarize the clinical notes in the patient chart.
